Abstract

We present SWE-QA, a comprehensive dataset and benchmark for evaluating multi-hop code comprehension in real-world software projects. The dataset contains 9,072 multiple-choice questions generated from 12 popular Python repositories selected from SWE-bench, designed to test complex code understanding capabilities that require reasoning across multiple code entities and files.

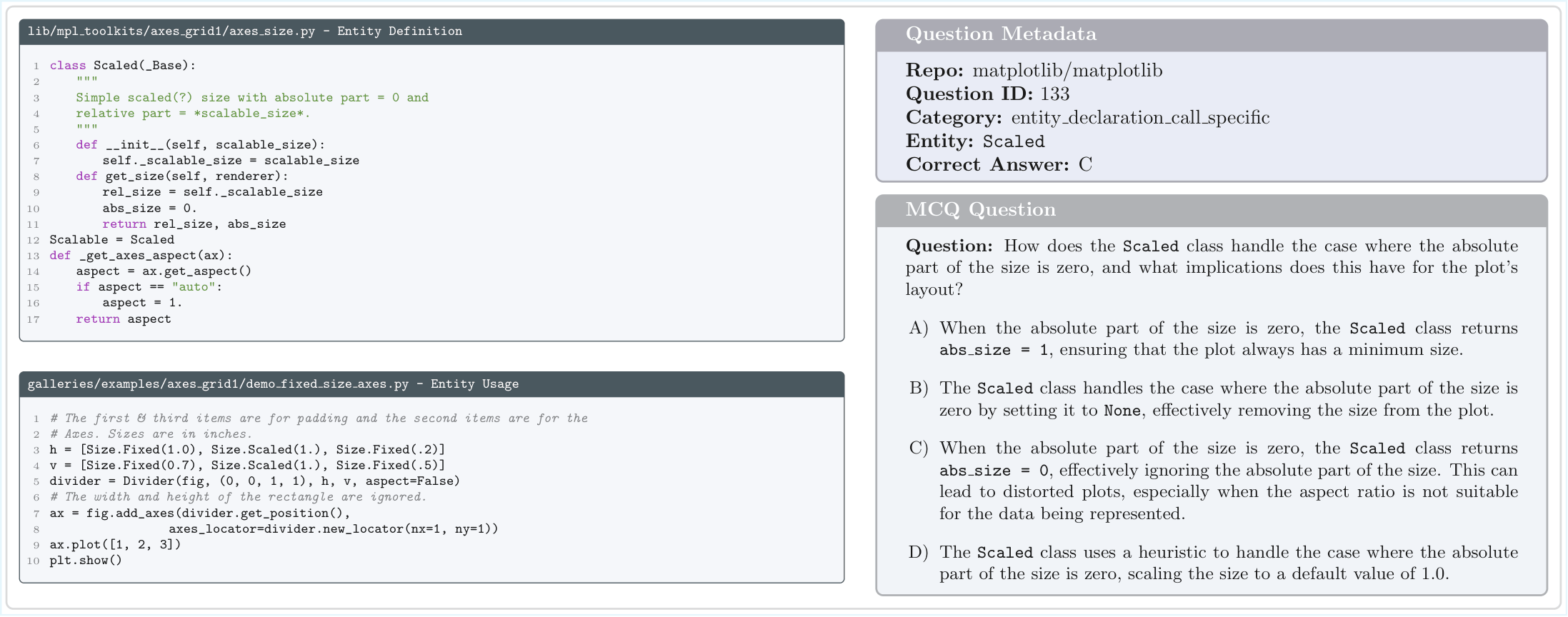

Our benchmark evaluates two key aspects of code comprehension: Declaration-and-Call (DC) reasoning, which tests the ability to connect entity declarations with their usage, and Interacting Entities (IE) reasoning, which assesses understanding of how multiple code entities interact within a codebase.

Paper

Our paper has been accepted at LREC 2026. Read the full paper for detailed methodology, experimental results, and analysis.

Dataset

The SWE-QA dataset is designed to evaluate multi-hop code comprehension with two question categories

and two experimental settings for comprehensive evaluation. The dataset is available on

🤗 Hugging Face

and can be loaded directly using the datasets library.

9,072

Multiple-choice questions

12

Python repositories from SWE-bench

2

Question categories (DC & IE)

2

Experimental settings

Dataset Components

- Oracle Setting (mcq_dataset.zip) - 9,072 questions with only relevant code chunks, testing pure comprehension ability without noise

- Noisy Oracle Setting (mcq_dataset_with_distractors.zip) - Same questions with additional distractor chunks, simulating realistic code retrieval scenarios

- Code Repositories (repos/) - Processed code chunks and entity metadata for all 12 repositories

Question Categories

- Declaration-and-Call (DC) - 4,584 questions testing the ability to trace from entity declarations to their usage or vice versa

- Interacting Entities (IE) - 4,488 questions evaluating understanding of interactions between multiple code entities

Repositories Included

Dataset Structure & Usage

Each question in the dataset includes formatted code chunks with metadata, a natural language question, four multiple-choice options, the correct answer, category label, and repository information.

Quick Start with Hugging Face:

from datasets import load_dataset

# Load the oracle setting

dataset = load_dataset("lailaelkoussy/swe-qa", split="oracle")

# Load the noisy oracle setting

dataset_noisy = load_dataset("lailaelkoussy/swe-qa", split="noisy_oracle")For detailed JSON schemas and usage instructions, please see the Hugging Face dataset card or the Dataset README in the main branch.

Citation

If you use this dataset in your research, please cite our paper:

@inproceedings{elkoussy2026sweqa,

title={SWE-QA: A Dataset and Benchmark for Complex Code Understanding},

author={ElKoussy, Laila and Perez, Julien},

booktitle={Proceedings of LREC-COLING 2026},

year={2026}

}Code & Repository

The complete dataset, paper PDF, and documentation are available in the

main branch

of our GitHub repository. The repository uses branch separation: the main branch contains all datasets and documentation,

while the gh-pages branch (this site) provides an interactive project page.